Everyone, meet Kjersti. She is the outdoor action type and loves kayaking, off-road biking and skiing. Metal-edge skis, of course.

But in the office at ScoutDI headquarters in Trondheim, Norway, she likes to ponder the challenges of indoor drone software systems. Downhill racing fades to a faint memory and climbing the autonomy ladder becomes a more attractive pastime.

In her master’s thesis, Kjersti investigated different ways of processing EEG data. The purpose was to create a Brain-Computer Interface (BCI) capable of classifying imagined body movements and converting them into drone control commands. In real time.

– Drone control and indoor drone autonomy are definitely very complex and interesting subjects, Kjersti says.

Kjersti Brynestad

Autonomy and usability

A basic six-level ladder is commonly used to describe any vehicle’s journey towards full autonomy. In the case of a drone, level zero is simply a pilot flying the drone. Only at level five can a drone fly autonomously in any environment under all conditions. This is the holy grail for any drone developer. But autonomy is not one-size-fits-all. It is meticulously built layer by layer and tailored to the specific application, not just in terms of the technology but also in terms of the man-machine interface and the gradual education of the operator.

– We need to keep an eye on what level of autonomy the drone pilot needs, expects or wants for their application. The drone and the operator must work well together. It’s perfectly possible to build something that either doesn’t work 100% or mainly confuses the operator. Often, there are better ways to assist than by removing control.

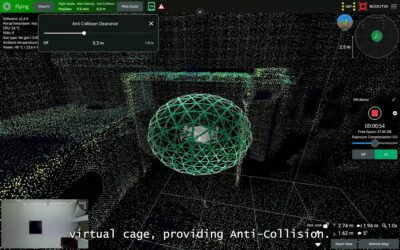

So making the drone safe and easy to use in any application and situation is the overarching and most important job for an autonomy engineer. The Scout 137 drone implements several autonomy functions that help the operator experience easy and stable flying, such as the collision avoidance features and the position-keeping demonstrated in this story.

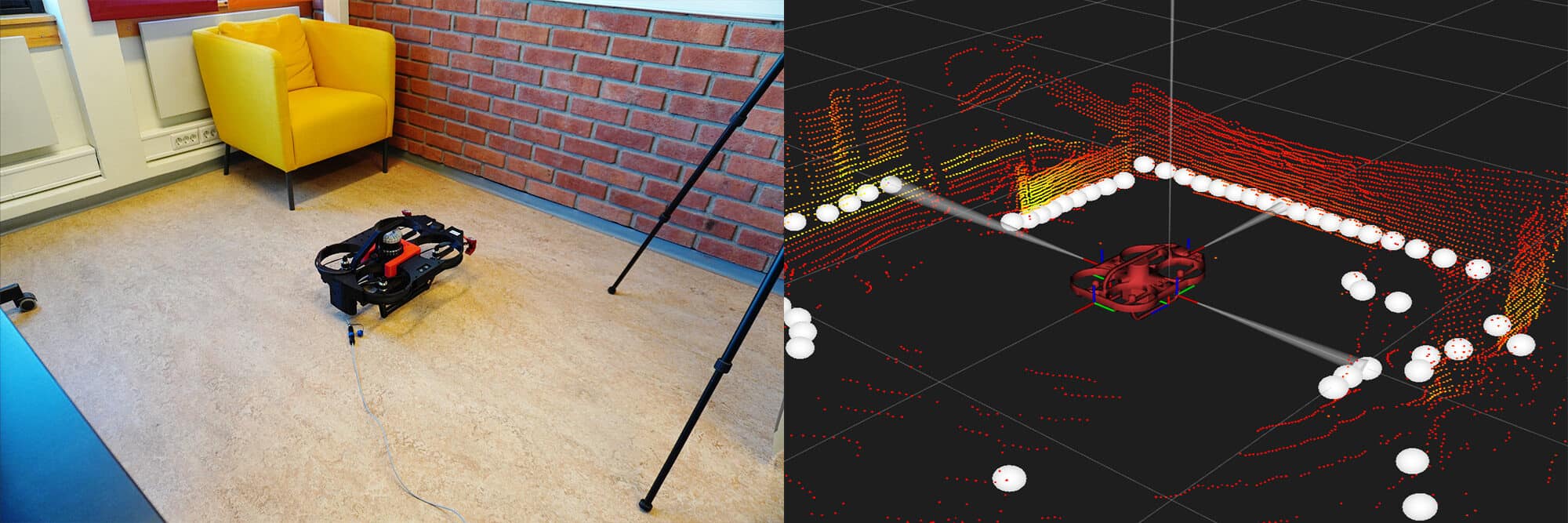

Obstacle detection, collision avoidance and robust positioning are important aspects of our drone’s autonomy. The Scout 137 drone has an on-board LiDAR (Light Detection and Ranging) sensor that provides the raw data powering these capabilities. In fact, this essential sensor not only enables robust autonomy features, but also supports the operator both during and after the inspection.
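
To make the idea of assisting without removing control a little more concrete, here is a minimal Python sketch of one common approach: scaling the pilot's velocity command down as range measurements report an obstacle in the commanded direction. This is purely illustrative and is not the Scout 137 implementation. The function name `limit_velocity`, the body-frame obstacle points, and the `stop_dist`, `slow_dist` and `half_angle` parameters are all assumptions made for the example.

```python
import math


def limit_velocity(cmd_vx, cmd_vy, obstacles,
                   stop_dist=0.5, slow_dist=2.0,
                   half_angle=math.radians(45)):
    """Scale a horizontal velocity command near obstacles (hypothetical helper).

    cmd_vx, cmd_vy: pilot command in the drone's body frame (m/s).
    obstacles: iterable of (x, y) points in the same frame (metres),
               e.g. derived from range measurements.
    Returns the possibly reduced (vx, vy) command.
    """
    speed = math.hypot(cmd_vx, cmd_vy)
    if speed == 0.0:
        return 0.0, 0.0

    # Unit vector of the commanded direction of travel.
    ux, uy = cmd_vx / speed, cmd_vy / speed

    scale = 1.0
    for ox, oy in obstacles:
        dist = math.hypot(ox, oy)
        # Only consider obstacles inside a cone around the commanded direction.
        if ox * ux + oy * uy < dist * math.cos(half_angle):
            continue
        # 1.0 at slow_dist or farther, 0.0 at stop_dist or closer.
        s = (dist - stop_dist) / (slow_dist - stop_dist)
        scale = min(scale, max(0.0, min(1.0, s)))

    return cmd_vx * scale, cmd_vy * scale
```

In this sketch the pilot keeps full authority in open space and when flying away from an obstacle. The command is only attenuated when it points towards something close, and it reaches zero at the assumed stop distance.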

Confined space inspection

In many cases it is important to be able to fly into confined spaces easily while the operator stays completely outside the inspection target throughout the job. While the drone navigates and maneuvers using the LiDAR data, the system also uses that data to generate a 3D map of the inspection target’s interior in real time. This 3D map gives the operator great situational awareness during flight.
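
As a rough illustration of how range data can be turned into a map while flying, the Python sketch below transforms points from the drone's frame into a fixed map frame using the drone's estimated pose, then records which small cubes (voxels) of space contain a measured surface. It is a simplified sketch under stated assumptions, not ScoutDI's mapping pipeline: the name `add_scan_to_map`, the pose format `(x, y, z, yaw)`, the yaw-only rotation and the voxel size are all hypothetical choices for the example.

```python
import math


def add_scan_to_map(voxels, points, pose, voxel_size=0.1):
    """Add one scan to a voxel map (hypothetical helper).

    voxels: set of (i, j, k) integer voxel indices, updated in place.
    points: iterable of (x, y, z) points in the drone's frame (metres).
    pose:   (x, y, z, yaw) of the drone in the map frame; roll and pitch
            are ignored in this simplified sketch.
    """
    x0, y0, z0, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    for px, py, pz in points:
        # Rotate about the vertical axis, then translate into the map frame.
        wx = x0 + c * px - s * py
        wy = y0 + s * px + c * py
        wz = z0 + pz
        # Quantise to a voxel index so repeated hits collapse into one cell.
        voxels.add((math.floor(wx / voxel_size),
                    math.floor(wy / voxel_size),
                    math.floor(wz / voxel_size)))
    return voxels
```

Calling this once per scan with the latest pose estimate grows the set of occupied voxels as the drone moves, which is the kind of structure a viewer could render next to the camera feed.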

– The 3D map is super-important to the operator. Without that, you would have to rely on the camera feed or line-of-sight to know anything about the drone’s position and heading.

The 3D map is shown in a split-screen view on the pilot’s navigation tablet, next to the live camera feed. It provides the visual aid needed to easily navigate inside the inspection target and do the job. It makes no difference if the interior is pitch dark, since the LiDAR operates equally well in complete darkness and bright daylight. Plus: With the LiDAR you can also see what’s behind the drone and next to it.

– So the LiDAR data is used both for autonomous drone positioning and to provide visual situation awareness to the drone operator.

Kjersti Brynestad, ScoutDI autonomy engineer

It’s a very interesting concept. The goal is of course that the operator can perform the inspection without entering the confined space at all, relying entirely on this system.

The operator remains in charge while the drone stays clear of all obstacles and helps get the job done, all while maintaining the safety of personnel and equipment. Including itself.

If you’d like to read more about life at ScoutDI and the autonomy of the Scout 137 drone, and watch a cool drone-fencing video, read this story: Malshan, the drone fencer.